When organizing its Information Security Awareness Program, it is natural for an organization to compare its overall security posture with similar institutions and similar programs.

In this article, we discuss what to watch for when comparing your measurement results with those of institutions in similar sectors, and which measurement criteria you can use for both external and internal comparisons when evaluating the effectiveness of your awareness program.

So, what are the criteria? And how many variables need to be similar for the comparison to be valid, or even to make sense? According to Bernard Marr & Co, benchmarks are reference points you use to compare your performance with the performance of others, whether other departments within the organization or external competitors. Business processes, procedures and performance analytics are compared against best practices and statistics from similar organizations.

Many companies across industries use phishing simulations as an important cyber training tactic that teaches employees to better identify and stop phishing attacks, in which attackers use deception to collect sensitive and personal information. Phishing simulations generally measure the rate at which recipients fall for the message (clicks, data entry, etc.) and the resulting report rate. Of the several types of benchmarking, phishing falls under performance benchmarking, which is based on performance metrics and can include not only comparison with other companies or competitors in similar industries, but also global comparison regardless of industry, or internal comparison within your own company, such as across departments, regions, or business units.
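
As an illustration of the internal comparison described above, here is a minimal sketch that computes click rate and report rate per department from raw simulation results. The record layout (`group`, `clicked`, `reported`), the function name `rates_by_group`, and the sample data are all assumptions made for this example; they do not come from any particular phishing platform.

```python
from collections import defaultdict


def rates_by_group(results):
    """Return click rate and report rate per group.

    `results` is an iterable of dicts with the hypothetical keys
    'group' (str), 'clicked' (bool) and 'reported' (bool),
    one dict per simulated email sent.
    """
    totals = defaultdict(lambda: {"sent": 0, "clicked": 0, "reported": 0})
    for record in results:
        group_totals = totals[record["group"]]
        group_totals["sent"] += 1
        group_totals["clicked"] += int(record["clicked"])
        group_totals["reported"] += int(record["reported"])

    return {
        group: {
            "sent": t["sent"],
            "click_rate": t["clicked"] / t["sent"],
            "report_rate": t["reported"] / t["sent"],
        }
        for group, t in totals.items()
    }


# Made-up sample data, for illustration only.
sample = [
    {"group": "Finance", "clicked": True, "reported": False},
    {"group": "Finance", "clicked": False, "reported": True},
    {"group": "Finance", "clicked": False, "reported": True},
    {"group": "Finance", "clicked": False, "reported": False},
    {"group": "IT", "clicked": False, "reported": True},
    {"group": "IT", "clicked": False, "reported": True},
]

summary = rates_by_group(sample)
for group, stats in sorted(summary.items()):
    print(group, stats)
```

The same function could be pointed at regions or business units by changing what goes into the `group` field. The number of emails sent is kept next to each rate so that very small groups are not over-interpreted.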

Most Information Security Officers are interested in how their awareness program metrics compare to those of similar organizations. This, however, is where performance comparison gets difficult. While identifying comparable areas of improvement by industry is key, it is important to track multiple variables when phishing simulations and their results are used as the primary driver.

Here are some variables to consider when evaluating the analytical details of the results of phishing simulations:

- The sample size included in the simulation as a representative of the institution,

- History and length of the information security awareness program,

- Difficulty of the simulation and number of indicators,

- Experience with simulation content (link/attachment/credential request),

- The scenario used for the simulation and how relevant it may be to all participants,

- When it was sent (day/time by location),

- Reporting ease/options,

- Availability and diversity of training and awareness materials,

- Employees' time spent in the organization or in the onboarding process (for example, a large influx of new employees may affect the results),

- Relative demographics of employees within the scope of the simulation,

- And the overall maturity of the Security Awareness Program or efforts.
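
To make the first variable in this list (sample size) concrete, the sketch below attaches a confidence interval to a measured click rate. The Wilson score interval is our own choice for the illustration, not something the source prescribes; the function name `wilson_interval` and the figures are hypothetical.

```python
import math


def wilson_interval(clicks, sent, z=1.96):
    """Wilson score interval for a click rate.

    z = 1.96 corresponds to roughly 95% confidence.
    Returns (lower, upper) bounds as fractions between 0 and 1.
    """
    if sent <= 0:
        raise ValueError("sent must be positive")
    p = clicks / sent
    denom = 1 + z ** 2 / sent
    center = (p + z ** 2 / (2 * sent)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / sent + z ** 2 / (4 * sent ** 2)) / denom
    )
    return center - half_width, center + half_width


# Two made-up simulations with the same 10% click rate
# but very different sample sizes.
small = wilson_interval(clicks=5, sent=50)
large = wilson_interval(clicks=100, sent=1000)

print("50 recipients:   %.3f to %.3f" % small)
print("1000 recipients: %.3f to %.3f" % large)
```

Both simulations report the same click rate, but the interval for the smaller one is several times wider, so a gap of a few percentage points against another institution may not mean much when only a small group was tested.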

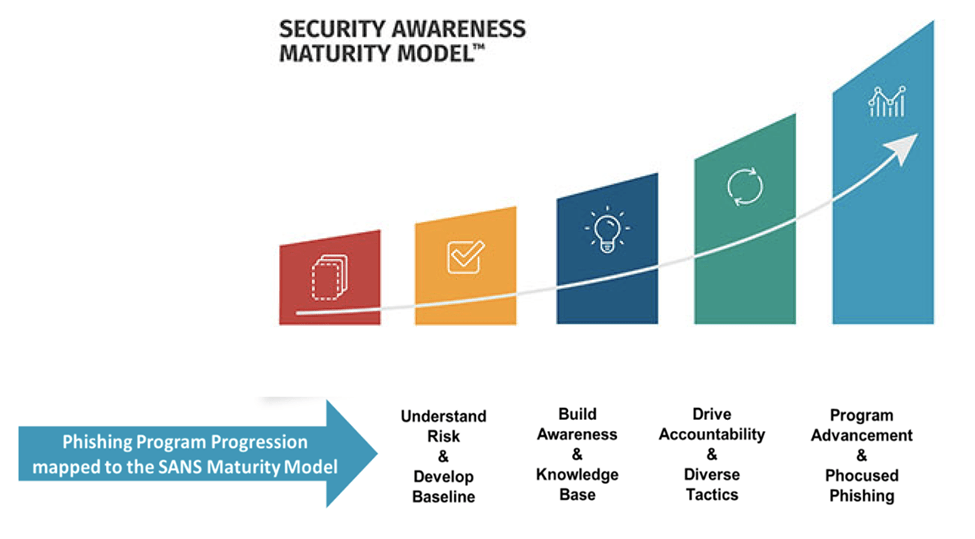

For example, if your phishing program falls at the upper end of the SANS Maturity Model, where you have previously promoted cyber awareness tactics and have achieved long-term sustainability and culture change, your phishing assessment results, even with a similar phishing simulation, may differ significantly from those of a similar organization whose program falls below the Compliance Focused level. Employees in programs below the Compliance Focused level have had less time dedicated to training and to practicing safe cyber awareness tactics.

Another variable to consider is the difficulty of the simulations. Statistics will be more valid if the simulations used by the businesses or organizations being compared are the same or similar. If the difficulty levels of the simulations you use for comparison differ, the comparison may be skewed.
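
One way to guard against this skew is to compare click rates only on difficulty tiers that both sides actually ran. The sketch below is a simplified illustration under our own assumptions: the tier labels, the `(clicks, sent)` tuples, and the function names are hypothetical, and the numbers are made up.

```python
def pooled_rate(results):
    """Overall click rate across all tiers. `results` maps tier -> (clicks, sent)."""
    clicks = sum(c for c, _ in results.values())
    sent = sum(s for _, s in results.values())
    return clicks / sent


def compare_matched(own, peer):
    """Compare click rates only on difficulty tiers present in both data sets."""
    comparison = {}
    for tier in sorted(set(own) & set(peer)):
        own_rate = own[tier][0] / own[tier][1]
        peer_rate = peer[tier][0] / peer[tier][1]
        comparison[tier] = {
            "own": own_rate,
            "peer": peer_rate,
            "gap": own_rate - peer_rate,
        }
    return comparison


# Made-up figures: we ran easy and hard simulations,
# the peer organization ran easy and medium ones.
own_results = {"easy": (30, 300), "hard": (90, 300)}
peer_results = {"easy": (45, 300), "medium": (60, 300)}

print("pooled own: ", pooled_rate(own_results))
print("pooled peer:", pooled_rate(peer_results))
print("matched:    ", compare_matched(own_results, peer_results))
```

In this made-up case the pooled figures suggest our organization clicks more often than the peer, while the only like-for-like tier ("easy") shows the opposite. The pooled gap comes from the mix of difficulty levels, not from employee behavior.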

Therefore, while benchmarking is important and can be used to increase leadership support, care should be taken to ensure that you are comparing apples to apples wherever possible. Benchmarking is a valuable evaluation tool only if you have the capacity to account for all of these factors and variables. Otherwise, placing too much emphasis on comparison statistics, instead of more effective metrics such as your own report rate and click rate, can drive the wrong behavior.

In the next article, we will discuss the “effective use of benchmarks in evaluating your phishing program.” Stay tuned for our future articles about benchmarking in your own organization.

Source: https://www.sans.org/blog/how-to-use-phishing-benchmarks-effectively-to-assess-your-program/